Artificial Intelligence

The history of artificial intelligence:

The idea of “artificial intelligence” goes back thousands of years, to ancient philosophers considering questions of life and death. In ancient times, inventors built mechanical devices called “automatons” that moved independently of human intervention. The word “automaton” comes from ancient Greek and means “acting of one’s own will.” One of the earliest records of an automaton dates from around 400 BCE and describes a mechanical pigeon created by a friend of the philosopher Plato. Much later, around 1495, Leonardo da Vinci created one of the most famous automatons.

So while the idea of a machine being able to function on its own is ancient, for the purposes of this article, we’re going to focus on the 20th century, when engineers and scientists began to make strides toward our modern-day AI.

Groundwork for AI: 1900-1950

In the early 1900s, a great deal of media centered on the idea of artificial humans, so much so that scientists of all sorts began asking whether it was possible to create an artificial brain. Some inventors even built early versions of what we now call “robots” (a word coined in a Czech play in 1921), though most of them were relatively simple. They were mostly steam-powered, and some could make facial expressions and even walk.

Dates of note:

- 1921: Czech playwright Karel Čapek released the science fiction play “Rossum’s Universal Robots,” which introduced the idea of “artificial people” that he named robots. This was the first known use of the word.

- 1929: Japanese professor Makoto Nishimura built the first Japanese robot, named Gakutensoku.

- 1949: Computer scientist Edmund Callis Berkeley published the book “Giant Brains, or Machines That Think,” which compared the newer models of computers to human brains.

Birth of AI: 1950-1956

During this period, interest in AI really came to a head. Alan Turing published “Computing Machinery and Intelligence,” which proposed what became known as the Turing Test, a benchmark experts have used to assess machine intelligence. The term “artificial intelligence” was coined and came into popular use.

Dates of note:

- 1950: Alan Turing published “Computing Machinery and Intelligence,” which proposed a test of machine intelligence called The Imitation Game.

- 1952: Computer scientist Arthur Samuel developed a program to play checkers, the first program ever to learn the game independently.

- 1955: John McCarthy and colleagues proposed a workshop at Dartmouth on “artificial intelligence,” which was held in 1956. The proposal is the first known use of the term, and the workshop brought it into popular usage.

What is Artificial Intelligence?

Let us first understand what AI is. From a bird’s-eye view, AI gives a computer program the ability to think and learn on its own. It is the simulation of human intelligence (hence, artificial) in machines so that they can do things we would normally rely on humans to do. There are three main types of AI based on capability: weak AI, strong AI, and super AI.

- Weak AI – Focuses on one task and cannot perform beyond its limitations (common in our daily lives)

- Strong AI – Can understand and learn any intellectual task that a human being can (researchers are striving to reach strong AI)

- Super AI – Surpasses human intelligence and can perform any task better than a human (still a concept)

Advantages and Disadvantages of Artificial Intelligence

An artificial intelligence program is a program that is capable of learning and thinking. Broadly, any program that performs a task we would normally assume a human would perform can be considered artificial intelligence. Let’s begin with the advantages of artificial intelligence.

Advantages of Artificial Intelligence

1. Reduction in Human Error

One of the biggest advantages of Artificial Intelligence is that it can significantly reduce errors and increase accuracy and precision. The decisions AI makes at every step are based on previously gathered information and a set of algorithms. When programmed properly, these errors can be reduced to nearly zero.

Example:

An example of the reduction in human error through AI is the use of robotic surgery systems, which can perform complex procedures with precision and accuracy, reducing the risk of human error and improving patient safety in healthcare.

2. Zero Risks

Another big advantage of AI is that humans can avoid many risks by letting AI robots take them on for us. Whether defusing a bomb, going to space, or exploring the deepest parts of the oceans, machines with metal bodies are resilient and can survive hostile environments. Moreover, they can work accurately and do not wear out easily.

Example:

One example is a fully automated production line in a manufacturing facility, where robots perform all tasks, eliminating the risk of human error and injury in hazardous environments.

3. 24×7 Availability

Some studies suggest that humans are productive for only about 3 to 4 hours a day. Humans also need breaks and time off to balance their work and personal lives. AI, by contrast, can work continuously without breaks. AI systems can process information much faster than humans and perform multiple tasks at once with accurate results. They can also handle tedious, repetitive jobs with ease.

Example:

An example of this is online customer support chatbots, which can provide instant assistance to customers anytime, anywhere. Using AI and natural language processing, chatbots can answer common questions, resolve issues, and escalate complex problems to human agents, ensuring seamless customer service around the clock.

4. Digital Assistance

Some of the most technologically advanced companies engage with users through digital assistants, which reduces the need for human personnel. Many websites use digital assistants to deliver user-requested content, and users can describe what they are looking for in conversation. Some chatbots are built in a way that makes it difficult to tell whether we are conversing with a human or a chatbot.

Example:

Businesses typically have a customer service team that addresses customers’ questions and concerns. Using AI, a business can create a chatbot or voice bot that answers many of these questions automatically.

5. New Inventions

In practically every field, AI is the driving force behind numerous innovations that can help humans solve many challenging problems.

For instance, recent advances in AI-based technologies have allowed doctors to detect breast cancer at earlier stages.

Example:

Another example of new inventions is self-driving cars, which use a combination of cameras, sensors, and AI algorithms to navigate roads and traffic without human intervention. Self-driving cars have the potential to improve road safety, reduce traffic congestion, and increase accessibility for people with disabilities or limited mobility. Various companies, including Tesla, Google, and Uber, have worked on developing them, and they could revolutionize transportation.

6. Unbiased Decisions

Human beings are driven by emotions, whether we like it or not. AI, on the other hand, is devoid of emotions and highly practical and rational in its approach. An advantage often cited for Artificial Intelligence is that it has no personal or emotional biases of its own, which can make its decisions more consistent. It can, however, still reproduce biases present in the data it was trained on.

Example:

An example of this is AI-powered recruitment systems that screen job applicants based on skills and qualifications rather than demographics. This can help reduce bias in the hiring process, leading to a more inclusive and diverse workforce.

7. Perform Repetitive Jobs

Much of our daily work consists of repetitive tasks, such as checking documents for flaws and mailing thank-you notes. We can use artificial intelligence to automate these menial chores efficiently and even eliminate “boring” tasks for people, allowing them to focus on being more creative.

Example:

An example of this is using robots in manufacturing assembly lines, which can handle repetitive tasks such as welding, painting, and packaging with high accuracy and speed, reducing costs and improving efficiency.

8. Daily Applications

Today, our everyday lives depend heavily on mobile devices and the internet. We use a variety of AI-powered apps and assistants, including Google Maps, Alexa, Siri, Cortana on Windows, and “OK Google,” for tasks such as taking selfies, making calls, and responding to emails. AI-based techniques also help us forecast today’s weather and the days ahead.

Example:

About 20 years ago, when planning a trip, you probably had to ask someone who had already been there for directions. Now all you need to do is ask Google where Bangalore is, and Google Maps will show Bangalore’s location along with the best route from where you are.

9. AI in Risky Situations

This is one of the main benefits of artificial intelligence. By creating AI robots that perform perilous tasks on our behalf, we can overcome many of the dangerous limitations that humans face. Such robots can be used effectively in natural or man-made disasters and other hazardous work, whether going to Mars, defusing a bomb, exploring the deepest regions of the oceans, or mining for coal and oil.

Example:

For instance, consider the 1986 explosion at the Chernobyl nuclear power plant in Ukraine. Anyone who came close to the core would have died within minutes, and at the time there were no AI-powered robots that could help reduce the effects of radiation by controlling the fire in its early phases.

10. Faster Decision-making

Faster decision-making is another benefit of AI. By automating certain tasks and providing real-time insights, AI can help organizations make faster and more informed decisions. This can be particularly valuable in high-stakes environments, where decisions must be made quickly and accurately to prevent costly errors or save lives.

Example:

An example of faster decision-making is using AI-powered predictive analytics in financial trading, where algorithms can analyze vast amounts of data in real time and make informed investment decisions faster than human traders, which can improve returns and reduce risk.

11. Pattern Identification

Pattern identification is another area where AI excels. With its ability to analyze vast amounts of data and identify patterns and trends, AI can help businesses and organizations better understand customer behavior, market trends, and other important factors. This information can be used to make better decisions and improve business outcomes.

Example:

An example of pattern identification is the use of AI in fraud detection, where machine learning algorithms can identify patterns and anomalies in transaction data to detect and prevent fraudulent activity, improving security and reducing financial losses for individuals and organizations.
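As a rough illustration of pattern identification, the sketch below applies one of the simplest anomaly-detection techniques, a z-score test, to a customer’s transaction amounts. It assumes past amounts are available as a plain list of numbers; the function name `is_anomalous`, the sample amounts, and the default threshold of 3 standard deviations are hypothetical choices. Production fraud-detection systems rely on many more signals and on trained models.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], amount: float, threshold: float = 3.0) -> bool:
    """Flag `amount` if it lies more than `threshold` standard deviations
    from the mean of the customer's past amounts (a simple z-score test)."""
    if len(history) < 2:
        # stdev needs at least two data points; with no baseline, do not flag.
        return False
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Every past amount was identical, so any different amount stands out.
        return amount != mu
    z = abs(amount - mu) / sigma
    return z > threshold


# Hypothetical purchase history for one customer.
past = [42.0, 38.5, 51.2, 45.0, 39.9, 47.3, 44.1]
print(is_anomalous(past, 46.0))   # False: close to the usual amounts
print(is_anomalous(past, 900.0))  # True: far outside the usual range
```

A fixed threshold keeps the idea visible; in practice, choosing it trades missed fraud against false alarms.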

12. Medical Applications

AI has also made significant contributions to the field of medicine, with applications ranging from diagnosis and treatment to drug discovery and clinical trials. AI-powered tools can help doctors and researchers analyze patient data, identify potential health risks, and develop personalized treatment plans. This can lead to better health outcomes for patients and help accelerate the development of new medical treatments and technologies.

Disadvantages of Artificial Intelligence

1. High Costs

The ability to create a machine that can simulate human intelligence is no small feat. It requires plenty of time and resources and can cost a great deal of money. AI also needs to run on the latest hardware and software to stay updated and meet the latest requirements, which makes it quite costly.

2. No Creativity

A big disadvantage of AI is that it cannot learn to think outside the box. AI is capable of learning over time from pre-fed data and past experiences, but it cannot be creative in its approach. A classic example is the bot Quill, which writes Forbes earnings reports. These reports contain only data and facts already provided to the bot. Although it is impressive that a bot can write an article on its own, the result lacks the human touch present in other Forbes articles.

3. Unemployment

Robots, one application of artificial intelligence, are displacing some occupations and, in a few cases, increasing unemployment. Some therefore argue that chatbots and robots replacing humans will always carry a risk of unemployment.

For instance, in some technologically advanced nations such as Japan, robots frequently replace human workers in manufacturing. The effect is not always a net loss, though: while automation replaces some workers to increase efficiency, it also creates new opportunities for humans to work.

4. Make Humans Lazy

AI applications automate the majority of tedious and repetitive tasks. Since we no longer have to memorize things or solve puzzles to get the job done, we tend to use our brains less and less. This dependence on AI could cause problems for future generations.

5. No Ethics

Ethics and morality are important human features that can be difficult to incorporate into an AI. The rapid progress of AI has raised concerns that one day AI could grow uncontrollably and eventually wipe out humanity. The hypothetical point at which AI’s growth becomes uncontrollable is often referred to as the AI singularity.

6. Emotionless

Since early childhood, we have been taught that neither computers nor other machines have feelings. Humans function as teams, and team management is essential for achieving goals. There is no denying that robots can outperform humans when functioning effectively, but it is also true that human connections, which form the basis of teams, cannot be replaced by computers.

7. No Improvement

Artificial intelligence cannot improve on its own the way humans do, because it is a technology based on pre-loaded facts and experience. AI is proficient at repeatedly carrying out the same task, but if we want any adjustments or improvements, we must manually alter the code. AI cannot be accessed and used in the same way as human intelligence, although it can store vast amounts of data.

Machines can only complete the tasks they have been developed or programmed for; when asked to do anything else, they frequently fail or produce useless results, which can have significant negative effects. As a result, they cannot be relied on for tasks outside their design.

Advantages and Disadvantages of AI in Different Sectors and Industries

AI in Healthcare

Advantage: The ability to enhance diagnosis and treatment. AI algorithms can analyze large volumes of medical data, including patient records, lab results, and medical images, to assist healthcare professionals in making accurate and timely diagnoses. AI can identify patterns and anomalies that may be difficult for human clinicians to detect, leading to earlier detection of diseases and improved treatment outcomes.

Disadvantage: The potential for ethical and privacy concerns. AI systems in healthcare rely heavily on patient data, including sensitive medical information. There is a need to ensure that this data is collected, stored, and used in a secure and privacy-conscious manner. Protecting patient privacy, maintaining data confidentiality, and preventing unauthorized access to personal health information are critical considerations.

AI in Marketing

Advantage: The ability to enhance targeting and personalization of marketing campaigns. AI algorithms can analyze vast amounts of customer data, including demographics, preferences, browsing behavior, and purchase history, to segment audiences and deliver highly targeted and personalized marketing messages. By leveraging AI, marketers can tailor their campaigns to specific customer segments, increasing the relevance and effectiveness of their marketing efforts. This level of targeting and personalization can lead to higher conversion rates, improved customer satisfaction, and increased return on investment (ROI) for marketing campaigns.

Disadvantage: The potential lack of human touch and creativity. While AI can automate various marketing tasks and generate data-driven insights, it may struggle to replicate the unique human elements of marketing, such as emotional connection, intuition, and creative thinking. AI algorithms may rely solely on data and predefined patterns, potentially missing out on innovative or out-of-the-box marketing approaches that require human creativity and intuition.

AI in Education

Advantage: The ability to provide personalized learning experiences. AI-powered systems can analyze vast amounts of data on student performance, preferences, and learning styles to create tailored educational content and adaptive learning paths. This personalization allows students to learn at their own pace, focus on areas where they need more support, and engage with content that is relevant and interesting to them.

Disadvantage: The potential for ethical and privacy concerns. AI systems collect and analyze a significant amount of data on students, including their performance, behavior, and personal information. There is a need to ensure that this data is handled securely, with appropriate privacy safeguards in place.

AI in Creativity

Advantage: The ability to augment human creativity and provide new avenues for artistic expression. AI technologies, such as generative models and machine learning algorithms, can assist artists, musicians, and writers in generating novel ideas, exploring new artistic styles, and pushing the boundaries of traditional creative processes.

Disadvantage: The potential lack of originality and authenticity in AI-generated creative works. While AI systems can mimic existing styles and patterns, there is an ongoing debate about whether AI can truly possess creativity in the same sense as humans. AI-generated works may lack the depth, emotional connection, and unique perspectives that come from human experiences and emotions.

AI in Transportation

Advantage: The potential to enhance safety and efficiency on roads and in various modes of transportation. AI-powered systems can analyze real-time data from sensors, cameras, and other sources to make quick and informed decisions. This can enable features such as advanced driver assistance systems (ADAS) and autonomous vehicles, which can help reduce human error and accidents. AI can also optimize traffic flow, improve route planning, and enable predictive maintenance of vehicles, leading to more efficient transportation networks and reduced congestion.

Disadvantage: The ethical and legal challenges it presents. Autonomous vehicles, for example, raise questions about liability in the event of accidents. Determining who is responsible when an AI-controlled vehicle is involved in a collision can be complex. Additionally, decisions made by AI systems, such as those related to traffic management or accident avoidance, may need to weigh ethical questions, such as the allocation of limited resources or the protection of passengers versus pedestrians. Balancing these ethical dilemmas and developing appropriate regulations and guidelines for AI in transportation is a complex and ongoing challenge.

Applications of Artificial Intelligence

Healthcare AI

The healthcare industry is set to benefit enormously from artificial intelligence.

AI can improve patient outcomes, reduce medical errors, and increase efficiency.

Here are some applications of AI in healthcare:

✓ Diagnosis and treatment: Predictive models can identify the likelihood of a patient developing a particular disease or condition. Then, a personalized treatment plan can be developed based on a patient’s medical history and current health status.

✓ Medical imaging: Medical images, such as X-rays and MRI scans, can be analyzed by AI-powered software to identify abnormalities and make accurate diagnoses.

✓ Robot-assisted surgery: Surgical robots and AI-assisted tools used during surgery can help increase accuracy and reduce the risk of surgeon error.

✓ Home Health AI: Health trackers, safety devices, and mental health tools can use AI to improve the quality of life of older adults.

Artificial Intelligence in Finance

AI has been widely used to analyze financial data, detect fraud, and develop investment strategies.

✓ Fraud detection: Predictive models can detect and flag fraudulent transactions in real time.

✓ Trading: Trading algorithms have long been used to analyze large amounts of financial data to make buy and sell decisions.

✓ Investment management: Many apps offer AI-managed investment portfolios tailored to clients’ risk tolerance, financial goals, and investment preferences.

AI in Transportation

Artificial Intelligence has revolutionized the transportation industry by enhancing safety, improving efficiency, and reducing costs.

Here are some applications of AI in transportation:

✓ Autonomous vehicles: Self-driving vehicles have the potential to improve road safety and reduce traffic congestion.

✓ Predictive maintenance: AI can be integrated into vehicles to anticipate problems with equipment and proactively schedule maintenance before breakdowns occur, reducing downtime and costs.

✓ Traffic management: AI-controlled traffic lights that adjust signal timing in real time can improve traffic flow and reduce congestion.
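To show what adjusting signals in real time might look like, here is a minimal sketch that splits one signal cycle among an intersection’s approaches in proportion to the number of waiting vehicles. It assumes queue counts arrive from sensors or cameras; the function name, the 90-second cycle, and the 10-second minimum green time are hypothetical, and real adaptive signal systems use considerably more sophisticated optimization.

```python
def allocate_green_time(
    queues: dict[str, int], cycle_s: int = 90, min_green_s: int = 10
) -> dict[str, int]:
    """Split one signal cycle among approaches in proportion to their queue
    lengths, guaranteeing every approach a minimum green time."""
    if not queues:
        raise ValueError("at least one approach is required")
    n = len(queues)
    if n * min_green_s > cycle_s:
        raise ValueError("cycle too short for the minimum green times")
    spare = cycle_s - n * min_green_s  # seconds left after the minimums
    total = sum(queues.values())
    greens = {}
    for approach, queued in queues.items():
        # With no traffic anywhere, share the spare time equally.
        share = spare * queued / total if total else spare / n
        greens[approach] = min_green_s + int(share)
    # Give the seconds lost to rounding down to the busiest approach.
    busiest = max(queues, key=queues.get)
    greens[busiest] += cycle_s - sum(greens.values())
    return greens


# Hypothetical vehicle counts from one sensor reading.
print(allocate_green_time({"north": 12, "south": 8, "east": 3, "west": 1}))
# {'north': 36, 'south': 26, 'east': 16, 'west': 12}
```

Re-running the allocation each cycle with fresh counts is what makes the timing adaptive rather than fixed.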

Applications of AI in Education

Personalized learning, streamlining administrative tasks, and enhancing the overall learning experience are just a handful of ways AI will transform education.

✓ Personalized learning: Adaptive learning systems can adjust to students’ unique learning needs and abilities.

✓ Student assessment: AI tools like ChatGPT can automate the grading of tests and essays, providing immediate feedback to students and reducing the workload for teachers.

✓ Administrative tasks: Tedious scheduling and record-keeping done by teachers can be automated.

Artificial Intelligence in Retail

AI is already used in the retail industry to improve customer experiences, optimize inventory management, and, as a result, boost sales.

Here are some applications of AI in retail:

✓ Personalized shopping: Recommending products based on a customer’s purchase history and preferences.

✓ Inventory management: Predict demand to inform buying quantities and reduce waste.

✓ Fraud detection: Preemptively detect scam transactions and prevent theft.
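Personalized product recommendations can be approximated with simple co-purchase counting: products that often appear in the same basket are suggested to customers who bought one of them. The sketch below is a minimal, hypothetical illustration of that idea; the function names and sample baskets are invented, and commercial recommenders typically combine many signals and learned models.

```python
from collections import Counter
from itertools import combinations


def build_co_purchase_counts(baskets: list[set[str]]) -> dict[str, Counter]:
    """Count how often each pair of products appears in the same basket."""
    counts: dict[str, Counter] = {}
    for basket in baskets:
        for a, b in combinations(sorted(basket), 2):
            counts.setdefault(a, Counter())[b] += 1
            counts.setdefault(b, Counter())[a] += 1
    return counts


def recommend(history: set[str], counts: dict[str, Counter], k: int = 3) -> list[str]:
    """Score products bought alongside the customer's past purchases and
    return the top-k products the customer has not bought yet."""
    scores: Counter = Counter()
    for item in history:
        for other, n in counts.get(item, Counter()).items():
            if other not in history:
                scores[other] += n
    return [item for item, _ in scores.most_common(k)]


# Hypothetical purchase baskets from an outdoor-gear store.
baskets = [
    {"tent", "sleeping bag", "lantern"},
    {"tent", "sleeping bag"},
    {"tent", "camp stove"},
    {"lantern", "batteries"},
]
counts = build_co_purchase_counts(baskets)
print(recommend({"tent"}, counts))  # ['sleeping bag', 'lantern', 'camp stove']
```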

AI in Manufacturing

AI is used to improve efficiency, reduce waste, and optimize manufacturing processes.

✓ Quality control: AI has been used to improve quality control by detecting defects and identifying areas for improvement.

✓ Supply chain optimization: AI is well suited to evaluating a manufacturer’s supply chain to predict demand and identify weak points.

Applications of AI in Agriculture

In agriculture, AI can enhance productivity, reduce waste, and increase yields.

✓ Crop monitoring: Track crop development and detect any diseases or pest issues early.

✓ Precision farming: Optimizing water and fertilizer usage and reducing waste.

✓ Harvest forecasting: Forecast harvest yields, allowing farmers to optimize their planting and workforce planning.

Energy Sector AI

AI can optimize energy production, reduce waste, and improve energy efficiency in a variety of subsectors, such as fossil fuels and renewables, including solar.

✓ Energy grid management: Manage the energy grid, predicting energy demand and reducing waste.

✓ Renewable energy: Optimize the production and storage of renewable energy, improving efficiency and reducing waste.
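A very simple baseline for predicting energy demand is a seasonal moving average: the load at the next time step is forecast as the average load at the same point in the previous few cycles (for example, the same hour on previous days). The sketch below assumes evenly spaced readings; the function name and defaults are hypothetical, and real grid forecasting also accounts for weather, holidays, and longer-term trends.

```python
from statistics import mean


def forecast_next(readings: list[float], period: int = 24, seasons: int = 3) -> float:
    """Forecast the next reading as the mean of the readings exactly
    1, 2, ..., `seasons` periods before it (a seasonal moving average)."""
    if period <= 0 or seasons <= 0:
        raise ValueError("period and seasons must be positive")
    if len(readings) < period * seasons:
        raise ValueError("not enough history for the requested number of seasons")
    n = len(readings)
    # The next reading would have index n; the same slot k periods earlier
    # has index n - k * period.
    same_slot = [readings[n - k * period] for k in range(1, seasons + 1)]
    return mean(same_slot)


# Tiny hypothetical example with a 4-step cycle and two cycles of history.
demo = [10.0, 20.0, 30.0, 40.0, 12.0, 22.0, 32.0, 42.0]
print(forecast_next(demo, period=4, seasons=2))  # 11.0
```

With hourly data and the defaults, `forecast_next(readings)` averages the readings taken exactly 24, 48, and 72 hours before the hour being forecast.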

Future of Artificial Intelligence

AI has already made its mark on society, from chatbots to ever-improving self-driving vehicles.

However, with this potential comes significant risks and challenges, such as ensuring that AI is developed and used responsibly and ethically.

AI Accessibility: More Available and Ubiquitous

The democratization of AI is already underway, and its accessibility will only increase in the coming years.

Cloud computing has made it easier and cheaper to access AI services and tools, with companies like Amazon, Google, and Microsoft offering AI-as-a-service.

Open-source platforms like TensorFlow and PyTorch have also made it easier for developers to create and deploy AI models.

One area where AI accessibility is rapidly growing is in the healthcare industry.

Medical institutions are using AI to help diagnose diseases and develop personalized treatments.

For example, PathAI is using AI to improve the accuracy of cancer diagnosis, while Nanox is developing AI-based tools to help radiologists analyze medical images.

AI Augmentation: Enhancing Human Capabilities and Collaboration

AI will not replace humans, but rather, it will shift the market’s priorities.

According to a report by McKinsey, by 2030, as many as 400 million workers could have to find different jobs as AI-driven automation disrupts the workforce.

AI will enable humans to collaborate with other humans and machines across borders and disciplines.

For example, AI-powered translation tools are already expanding the scope of where companies can do business worldwide.

AI Opportunities and Challenges: New Markets, Products, Services, and Jobs

The widespread adoption of AI will create new markets, products, services, and jobs. According to a report by PwC, AI is expected to contribute up to $15.7 trillion to the global economy by 2030.

However, AI will pose new ethical, social, and legal issues.

For example, AI algorithms can perpetuate bias and discrimination, as seen in the case of facial recognition technology.

We need to ensure that AI is aligned with our values and goals and benefits everyone equally.

While some jobs may be automated, new jobs will emerge that require AI-related skills.

For example, data scientists, AI ethicists, and explainability specialists will be in high demand.

According to the World Economic Forum, by 2025, the emerging professions of data scientists, machine learning specialists, and robotics engineers will be in high demand across industries.

7 TYPES OF ARTIFICIAL INTELLIGENCE

- Artificial Narrow Intelligence: AI designed to complete very specific actions; unable to independently learn.

- Artificial General Intelligence: AI designed to learn, think and perform at similar levels to humans.

- Artificial Superintelligence: AI able to surpass the knowledge and capabilities of humans.

- Reactive Machines: AI capable of responding to external stimuli in real time; unable to build memory or store information for future.

- Limited Memory: AI that can store knowledge and use it to learn and train for future tasks.

- Theory of Mind: AI that can sense and respond to human emotions, plus perform the tasks of limited memory machines.

- Self-aware: AI that can recognize others’ emotions, plus has a sense of self and human-level intelligence; the final stage of AI.

Based on how they learn and how far they can apply their knowledge, all AI can be broken down into three capability types: Artificial narrow intelligence, artificial general intelligence and artificial superintelligence. Here’s what to know about each.

1. ARTIFICIAL NARROW INTELLIGENCE

Artificial narrow intelligence (ANI), also known as narrow AI or weak AI, describes AI tools designed to carry out very specific actions or commands. ANI technologies are built to serve and excel in one cognitive capability, and they cannot independently learn skills beyond their design. They often use machine learning and neural network algorithms to complete these specified tasks.

For instance, natural language processing AI is a type of narrow intelligence because it can recognize and respond to voice commands, but cannot perform other tasks beyond that.

Some examples of artificial narrow intelligence include image recognition software, self-driving cars and AI virtual assistants like Siri.

2. ARTIFICIAL GENERAL INTELLIGENCE

Artificial general intelligence (AGI), also called general AI or strong AI, describes AI that can learn, think and perform a wide range of actions similarly to humans. The goal of designing artificial general intelligence is to be able to create machines that are capable of performing multifunctional tasks and act as lifelike, equally intelligent assistants to humans in everyday life.

Though still a work in progress, the groundwork of artificial general intelligence could be built from technologies such as supercomputers, quantum hardware and generative AI models like ChatGPT.

3. ARTIFICIAL SUPERINTELLIGENCE

Artificial superintelligence (ASI), or super AI, is the stuff of science fiction. It’s theorized that once AI has reached the general intelligence level, it will soon learn at such a fast rate that its knowledge and capabilities will become stronger than that even of humankind.

ASI would act as the backbone technology of completely self-aware AI and other individualistic robots. Its concept is also what fuels the popular media trope of “AI takeovers,” as seen in films like Ex Machina or I, Robot. But at this point, it’s all speculation.

“Artificial superintelligence will become by far the most capable forms of intelligence on earth,” said David Rogenmoser, CEO of AI writing company Jasper. “It will have the intelligence of human beings and will be exceedingly better at everything that we do.”

Functionality-Based Types of Artificial Intelligence

Functionality concerns how an AI applies its learning capabilities to process data, respond to stimuli and interact with its environment. As such, AI can be sorted by four functionality types.

4. REACTIVE MACHINES

AI began with the development of reactive machines, the most fundamental type of AI. Reactive machines are just that: reactionary. They can respond to immediate requests and tasks, but they aren’t capable of storing memory or learning from past experiences.

“They cannot improve their functionality through experience, and can only respond to a limited combination of inputs.”

In practice, reactive machines can read and respond to external stimuli in real time. This makes them useful for performing basic autonomous functions, such as filtering spam from your email inbox or recommending movies based on your most recent Netflix searches.
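The defining property of a reactive machine, that its response depends only on the current input, can be illustrated with a tiny rule-based spam check. This is a hypothetical sketch: the phrase list and the `min_hits` threshold are invented, and real spam filters are usually trained statistical models rather than hand-written rules. The point is that the function keeps no state between calls, so it cannot learn from past emails.

```python
# Hypothetical list of suspicious phrases, chosen only for illustration.
SPAM_PHRASES = ("free money", "act now", "winner", "click here")


def is_spam(subject: str, body: str, min_hits: int = 2) -> bool:
    """React to the current email only: count suspicious phrases and flag
    the message if enough of them appear. Nothing is remembered between
    calls, so the same input always produces the same output."""
    text = f"{subject} {body}".lower()
    hits = sum(phrase in text for phrase in SPAM_PHRASES)
    return hits >= min_hits


print(is_spam("You are a WINNER", "Click here to claim your prize"))  # True
print(is_spam("Meeting notes", "Agenda attached for Monday"))         # False
```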

Most famously, IBM’s reactive AI machine Deep Blue was able to read real-time cues in order to beat Russian chess grandmaster Garry Kasparov in a 1997 chess match. But beyond that, reactive AI can’t build upon previous knowledge or perform more complex tasks. In order to apply AI in more advanced scenarios, developments in data storage and memory management needed to occur.

5. LIMITED MEMORY

The next step in AI’s evolution is developing a capacity for storing knowledge. But it would be nearly three decades before that breakthrough was reached, according to Rafael Tena, senior AI researcher at insurance company Acrisure Technology Group.

“There was a huge amount of progress in the 80s,” Tena said. But that eventually slowed. “There were small incremental changes …until deep learning came around.”

In 2012, the field of AI made major progress. Innovations from Google and ImageNet made it possible for artificial intelligence to store past data and make predictions using it. This type of AI is referred to as limited memory AI, because it can build its own limited knowledge base and use that knowledge to improve over time. Today, the limited memory model represents the majority of AI applications.

“Nearly all existing applications that we know of come under this category of AI,” Rogenmoser said. “All present-day AI systems are trained by large volumes of training data that they store in their memory to form a reference model for solving future problems.”
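The idea of storing training data as a reference model can be illustrated with a minimal Python sketch. It assumes a simple 1-nearest-neighbor classifier as a stand-in; the class `NearestNeighborMemory`, its methods, and the feature values are hypothetical, and most modern systems train statistical models such as neural networks rather than looking up raw stored examples. Labeled examples are kept in memory, and each new example can change later predictions, which is the sense in which such a system improves over time.

```python
import math


class NearestNeighborMemory:
    """Toy 1-nearest-neighbor classifier (hypothetical illustration).

    Every labeled example is stored; predictions look up the closest one.
    """

    def __init__(self):
        self.examples = []  # list of (features, label) pairs

    def learn(self, features, label):
        """Store a new labeled experience."""
        self.examples.append((tuple(features), label))

    def predict(self, features):
        """Return the label of the stored example closest to `features`."""
        if not self.examples:
            raise ValueError("no training data stored yet")
        _, label = min(self.examples, key=lambda ex: math.dist(ex[0], features))
        return label


# Hypothetical email features: (number of exclamation marks, number of links).
memory = NearestNeighborMemory()
memory.learn((0, 0), "not spam")
memory.learn((5, 3), "spam")
print(memory.predict((4, 2)))  # spam

# Feedback from a user becomes a new stored example and changes the answer.
memory.learn((4, 2), "not spam")
print(memory.predict((4, 2)))  # not spam
```

Unlike the reactive rule, the same input can get a different answer once the system has stored more experience.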

Limited memory AI can be applied in a broad range of scenarios, from smaller-scale applications, such as chatbots, to self-driving cars and other advanced use cases.

In terms of AI’s progress, limited memory technology is the furthest we’ve come, but it’s not the final destination. Limited memory machines can learn from past experiences and store knowledge, but they can’t pick up on subtle environmental changes or emotional cues, and they can’t reach human-level intelligence.

“Current models have a one-way relationship,” Rogenmoser said. “AI [tools] like Alexa and Siri don’t react with any emotional support when you yell at them.”

6. THEORY OF MIND

The concept of AI that can perceive and pick up on the emotions of others hasn’t been fully realized yet. This concept is referred to as “theory of mind,” a term borrowed from psychology that describes humans’ ability to read the emotions of others and predict future actions based on that information.

“Machines may work better than us 90 percent of the time, but that last ten percent, what you would describe as common sense, is really hard to get to.”

Tena provided an example to illustrate how a successful theory of mind application would revolutionize the technology: A self-driving car may perform better than a human driver the majority of the time because it won’t make the same human errors. But if you, as a driver, know that your neighbor’s kid tends to play close to the street after school, you’ll know instinctively to slow down while passing that neighbor’s driveway — something an AI vehicle equipped with basic limited memory wouldn’t be able to do.

Theory of mind could bring plenty of positive changes to the tech world, but it also poses its own risks. Because emotional cues are so nuanced, it would take AI machines a long time to learn to read them reliably, and they could make big errors while still in the learning stage. Some people also fear that once technologies can respond to emotional signals as well as situational ones, the result could be the automation of some jobs. But there is no need to worry just yet: Rogenmoser said that this hypothetical future is still very far off.

“Right now, this intelligence is science fiction,” he said. “We’re not even close to developing this type of AI, so no one is getting their job stolen by AI.”

7. SELF-AWARE

The stage beyond theory of mind, when artificial intelligence develops self-awareness, is referred to as the AI point of singularity. It’s thought that once that point is reached, AI machines will be beyond our control, because they’ll not only be able to sense the feelings of others, but will have a sense of self as well.

“People both strive to create this type of AI and fear the consequences of its creation, worrying that this type of AI could steal our jobs or take over our world,” Rogenmoser said. “If this type of AI is successfully created, no one knows what the impact will be.”


Researchers and engineers are taking steps to develop rudimentary versions of self-aware AI. Perhaps the most famous example is Sophia, a robot developed by Hanson Robotics.

While not technically self-aware, Sophia’s advanced application of current AI technologies provides a glimpse of AI’s potentially self-aware future. It’s a future of promise as well as danger, and there’s debate about whether it’s ethical to build sentient AI at all.

But for now, Rogenmoser said we don’t need to worry about AI conquering the world.

“AI is going to become much better at solving real use cases, but I want to express that I don’t think this [means] the end of humans and the end of work,” he said. “We will continue to see AI pop up in useful ways to amplify the great work that people are already doing.”

Strong AI Vs. Weak AI

Intelligence is tricky to define, which is why AI experts typically distinguish between strong AI and weak AI.

Strong AI

Strong AI, also known as artificial general intelligence, is a machine that can solve problems it’s never been trained to work on — much like a human can. This is the kind of AI we see in movies, like the robots from Westworld or the character Data from Star Trek: The Next Generation. This type of AI doesn’t actually exist yet.

The creation of a machine with human-level intelligence that can be applied to any task is the Holy Grail for many AI researchers, but the quest for artificial general intelligence has been fraught with difficulty. And some believe strong AI research should be limited, due to the potential risks of creating a powerful AI without appropriate guardrails.

In contrast to weak AI, strong AI represents a machine with a full set of cognitive abilities — and an equally wide array of use cases — but time hasn’t eased the difficulty of achieving such a feat.

Weak AI

Weak AI, sometimes referred to as narrow AI or specialized AI, operates within a limited context and is a simulation of human intelligence applied to a narrowly defined problem (like driving a car, transcribing human speech or curating content on a website).

Weak AI is often focused on performing a single task extremely well. While these machines may seem intelligent, they operate under far more constraints and limitations than even the most basic human intelligence.

Weak AI examples include:

- Siri, Alexa and other smart assistants

- Self-driving cars

- Google search

- Conversational bots

- Email spam filters

- Netflix’s recommendations

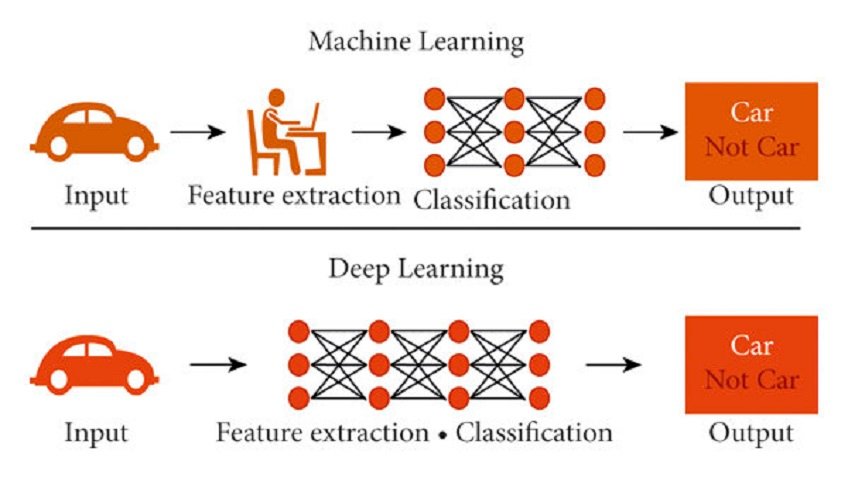

Machine Learning Vs. Deep Learning

Although the terms “machine learning” and “deep learning” come up frequently in conversations about AI, they should not be used interchangeably. Deep learning is a form of machine learning, and machine learning is a subfield of artificial intelligence.

Machine Learning

A machine learning algorithm is fed data and uses statistical techniques to “learn” how to get progressively better at a task, without having been explicitly programmed for that task. Rather than following hand-written rules, ML algorithms use historical data as input to predict new output values. ML includes both supervised learning (where the expected output for each input is known, thanks to labeled data sets) and unsupervised learning (where the expected outputs are unknown because the data sets are unlabeled).
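The difference between supervised and unsupervised learning can be shown with two tiny Python sketches. The function names `fit_line` and `two_means` and all the numbers are hypothetical, chosen only for illustration. The first function uses labeled historical pairs to fit a straight line by ordinary least squares and then predicts an output for a new input; the second is a bare-bones k-means with two clusters, which groups unlabeled values without being told what the groups are. Real ML libraries implement far more robust versions of both techniques.

```python
def fit_line(xs, ys):
    """Supervised learning: ordinary least squares on labeled (x, y) pairs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


def two_means(values, iterations=10):
    """Unsupervised learning: k-means with k=2 on unlabeled 1-D values."""
    lo, hi = min(values), max(values)  # initial centroids
    for _ in range(iterations):
        # Assign each value to the nearer centroid.
        near_lo = [v for v in values if abs(v - lo) <= abs(v - hi)]
        near_hi = [v for v in values if abs(v - lo) > abs(v - hi)]
        if not near_lo or not near_hi:
            break  # all values identical; nothing to split
        # Move each centroid to the mean of its cluster.
        lo = sum(near_lo) / len(near_lo)
        hi = sum(near_hi) / len(near_hi)
    return lo, hi


# Labeled historical data: hours studied -> exam score (made-up numbers).
slope, intercept = fit_line([1, 2, 3, 4], [52, 54, 56, 58])
print(slope * 5 + intercept)  # predicted score for 5 hours: 60.0

# Unlabeled data: no "right answer" is given; the algorithm finds structure.
print(two_means([1.0, 1.2, 0.8, 9.0, 9.5, 10.0]))  # roughly (1.0, 9.5)
```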

Deep Learning

Deep learning is a type of machine learning that runs inputs through a biologically inspired neural network architecture. The neural networks contain a number of hidden layers through which the data is processed, allowing the machine to go “deep” in its learning, making connections and weighting input for the best results.
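What data passing through hidden layers looks like can be sketched as a minimal forward pass in plain Python. This is an illustration under stated assumptions: the weights are hypothetical, hand-picked numbers rather than learned ones, and training (adjusting the weights from data, typically with backpropagation and gradient descent) is left out entirely. Each layer computes weighted sums of its inputs plus a bias and applies a ReLU nonlinearity; stacking hidden layers like this, usually many more than two, is what the “deep” in deep learning refers to.

```python
def relu(x):
    """Nonlinearity: pass positive values through, clip negatives to zero."""
    return max(0.0, x)


def identity(x):
    """No nonlinearity; used for the output layer here."""
    return x


def dense(inputs, weights, biases, activation=relu):
    """One fully connected layer: each neuron takes a weighted sum of all
    inputs, adds its bias, and applies the activation function."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Pass the input through every layer in turn; all but the last are hidden."""
    activations = inputs
    for weights, biases, activation in layers:
        activations = dense(activations, weights, biases, activation)
    return activations


# Hypothetical weights: 2 inputs -> 3 hidden -> 2 hidden -> 1 output.
network = [
    ([[1.0, -1.0], [0.5, 0.5], [-1.0, 1.0]], [0.0, 0.0, 0.0], relu),
    ([[1.0, 1.0, 1.0], [1.0, -1.0, 0.0]], [0.0, 0.5], relu),
    ([[1.0, -2.0]], [0.0], identity),
]

print(forward([2.0, 1.0], network))  # [2.5]
```

In a real deep learning system the weights would be learned from data, and libraries would perform these sums as matrix operations on specialized hardware.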